Data collection and preprocessing

To ensure that the data are broadly representative, more than 1 million financial transaction records were collected through multiple channels. First, cooperative relationships were established with more than 10 financial institutions, which provided about 600,000 historical transaction records covering credit card transactions, electronic bank transfers and various account activities. Second, about 300,000 anonymized records were collected from the public data set platforms Kaggle and the UCI Machine Learning Repository, which supplied useful data while protecting users’ privacy. Finally, in cooperation with five professional financial data providers, about 100,000 detailed financial market records were obtained, covering stock trading, bonds, futures and other financial derivatives.

After the raw data were collected, strict data cleaning was applied to ensure accuracy and integrity. The cleaning steps included removing duplicate records to eliminate redundancy; handling missing values by filling, interpolation or deletion, chosen according to the data characteristics and business logic; and detecting and handling outliers using statistical methods and domain knowledge, thereby reducing the negative impact of noisy data on subsequent modeling.
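The cleaning steps described above can be illustrated with a minimal pandas sketch. The column names (`amount`, `type`), the median fill, the `"unknown"` placeholder and the 1.5 × IQR clipping rule are assumptions chosen for illustration; the study does not specify which filling or outlier rules were applied.

```python
import numpy as np
import pandas as pd

def clean_transactions(df: pd.DataFrame) -> pd.DataFrame:
    """Deduplicate, fill missing values, and clip outliers (illustrative only)."""
    df = df.drop_duplicates().copy()
    # Fill missing numeric values with the column median (one possible choice).
    df["amount"] = df["amount"].fillna(df["amount"].median())
    # Fill missing categories with an explicit placeholder label.
    df["type"] = df["type"].fillna("unknown")
    # Clip amounts outside the 1.5 * IQR fences, a common statistical outlier rule.
    q1, q3 = df["amount"].quantile([0.25, 0.75])
    iqr = q3 - q1
    df["amount"] = df["amount"].clip(lower=q1 - 1.5 * iqr, upper=q3 + 1.5 * iqr)
    return df.reset_index(drop=True)

# Hypothetical toy data: one duplicate row, two missing values, one extreme amount.
raw = pd.DataFrame({
    "amount": [10.0, 10.0, 12.0, np.nan, 11.0, 5000.0],
    "type": ["card", "card", "transfer", "card", None, "card"],
})
cleaned = clean_transactions(raw)
```

In a fraud setting, extreme amounts may themselves be informative, so clipping or deleting them is a design choice that should be checked against the business logic rather than applied blindly.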

Data preprocessing is a key step before ML model training; it transforms the data into a format suitable for model learning. In this study, numerical features are first standardized or normalized so that they share a consistent scale, which eliminates the influence of differing units and ranges. Categorical features are encoded with one-hot encoding so that the model can identify and process them effectively. For time-series data, the time windows are divided carefully and meaningful features are extracted from them in order to capture the dynamic information in the series. The data preprocessing results are shown in Table 1.
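As a rough illustration of the standardization and one-hot encoding steps, the following sketch uses scikit-learn's `ColumnTransformer`. The column names and the toy values are hypothetical, and standardization (rather than min-max normalization) is chosen here only as an example.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical transactions with one numerical and one categorical feature.
df = pd.DataFrame({
    "amount": [10.0, 250.0, 40.0, 900.0],
    "type": ["card", "transfer", "card", "withdrawal"],
})

preprocessor = ColumnTransformer([
    # Standardize numerical features to zero mean and unit variance.
    ("num", StandardScaler(), ["amount"]),
    # One-hot encode categories; unseen categories at inference are ignored.
    ("cat", OneHotEncoder(handle_unknown="ignore"), ["type"]),
])
X = preprocessor.fit_transform(df)
# The transformer may return a sparse matrix; convert to a dense array for inspection.
X = X.toarray() if hasattr(X, "toarray") else np.asarray(X)
```

The transformer should be fitted on the training split only and then applied to the other splits, so that scaling statistics do not leak from held-out data.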

Feature engineering

Feature engineering plays a pivotal role in machine learning tasks, as it entails distilling meaningful information from raw data and converting it into a form that the model can interpret and utilize. In this research, the process of feature engineering is bifurcated into two primary stages: feature extraction and feature selection.

Feature extraction is crucial to the success of the machine learning model: it draws valuable information from the raw data and converts it into feature vectors. Basic transaction information, such as amount, time and type, is used directly and gives the model intuitive transaction characteristics. Because financial fraud may follow specific temporal patterns, the sequence of transactions before and after each transaction is also analyzed, yielding time-series features such as the transaction interval and the change in amount. User behavior patterns are a further focus: a behavioral profile is built for each user from statistics such as transaction frequency, average transaction amount and maximum/minimum transaction amount. Where available, geographical information, namely the transaction location and how frequently it changes, is also included in the feature set and gives the model a geographical dimension.
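The time-series and behavioral features described above could be derived along the following lines. This is a sketch under assumed column names (`user_id`, `timestamp`, `amount`); the actual schema and feature definitions used in the study are not given.

```python
import pandas as pd

# Hypothetical transaction log for two users.
tx = pd.DataFrame({
    "user_id": [1, 1, 1, 2, 2],
    "timestamp": pd.to_datetime([
        "2024-01-01 10:00", "2024-01-01 10:05", "2024-01-02 09:00",
        "2024-01-01 12:00", "2024-01-03 12:00",
    ]),
    "amount": [20.0, 25.0, 400.0, 15.0, 18.0],
})
tx = tx.sort_values(["user_id", "timestamp"]).reset_index(drop=True)

# Time-series features: gap and amount change relative to the user's previous transaction.
grouped = tx.groupby("user_id")
tx["interval_s"] = grouped["timestamp"].diff().dt.total_seconds()
tx["amount_change"] = grouped["amount"].diff()

# Behavioral profile: per-user transaction count, mean, max and min amount.
profile = tx.groupby("user_id")["amount"].agg(
    tx_count="count", mean_amount="mean", max_amount="max", min_amount="min"
).reset_index()
features = tx.merge(profile, on="user_id", how="left")
```

The first transaction of each user has no predecessor, so its interval and amount change are missing and need the same missing-value handling as any other feature.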

Feature selection eliminates redundant and irrelevant features to simplify the model and improve its performance. In this study, recursive feature elimination (RFE) is used to iteratively identify the optimal feature subset based on the model’s performance. RFE does this by recursively considering progressively smaller feature sets [21].

The steps of the RFE algorithm are as follows:

Input: training data set \(D\), initial feature set \(F\), required number of features \(k\)

Output: selected feature subset \(S\)

\(S = F\) // Initialize the selected feature subset with all features

Model = InitializeBaseModel()

while \(|S| > k\) do

Model.Train\((D, S)\) // Train the model on the current feature subset \(S\)

Importance = Model.GetFeatureImportance() // Importance score of each feature in the current subset

LeastImportant = the feature in \(S\) with the minimum importance score

\(S = S \setminus \{\text{LeastImportant}\}\) // Remove the least important feature from the subset

end while

return \(S\)
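The procedure above corresponds closely to scikit-learn's `RFE` class, which removes `step` features per iteration until `n_features_to_select` remain. The sketch below is illustrative only: the decision-tree base estimator, the synthetic data and the target of three features are assumptions, since the study does not state which base model or value of \(k\) was used.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the transaction data (D, F): 8 features, 3 of them informative.
X, y = make_classification(
    n_samples=300, n_features=8, n_informative=3, n_redundant=0, random_state=0
)

# k = 3; step=1 removes the single least important feature in each iteration.
selector = RFE(
    estimator=DecisionTreeClassifier(random_state=0),
    n_features_to_select=3,
    step=1,
)
selector.fit(X, y)

# Indices of the selected subset S; ranking_ is 1 for every selected feature.
selected = [i for i, keep in enumerate(selector.support_) if keep]
```

`selector.ranking_` records the elimination order, which can be plotted as a complement to the importance scores of the final model.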

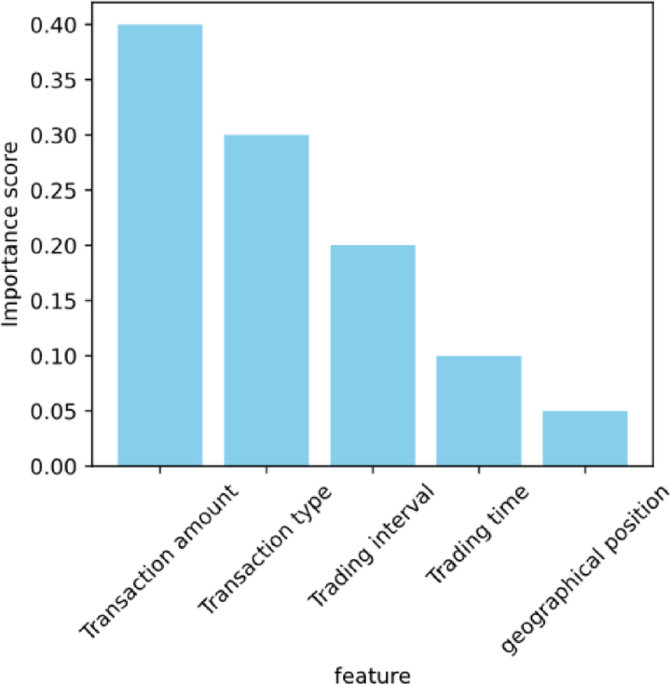

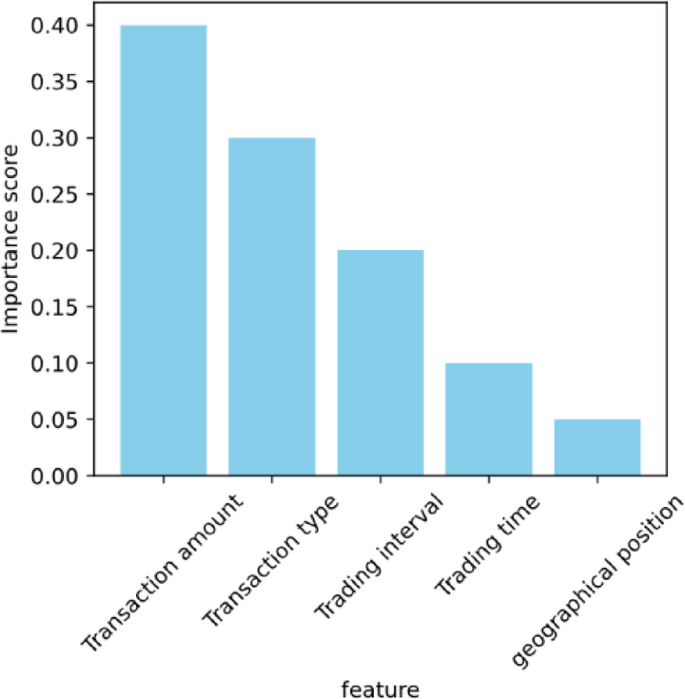

The RFE algorithm is implemented and the importance score of each feature is obtained. The feature selection result is shown in Fig. 1.

Fig. 1 Feature selection result.

Transaction amount has the highest importance score, indicating that it is a key indicator in the financial fraud detection model. This may be because fraudulent transactions often involve unusually large amounts, or frequent small transactions within a short period that are intended to avoid detection. Transaction type and transaction interval also score relatively high, indicating that they play an important role in distinguishing normal from fraudulent transactions: certain transaction types may be more likely to be associated with fraud, and abnormal patterns in transaction intervals may signal that fraudsters are trying to conceal their behavior. The importance scores of transaction time and geographical location are relatively low, but this does not mean that they are irrelevant. A low score may mean that these features contribute less when used alone, yet they can still serve as auxiliary information in combination with other features to improve overall model performance. The feature selection results also suggest that financial fraud detection should consider information from multiple dimensions rather than rely on a single feature. Such multi-feature analysis helps improve the generalization ability and accuracy of the model.

Model construction

In this study, the Stacking ensemble learning algorithm is selected as the main ML model [22]. Stacking is an ensemble learning technique that builds a stronger model by combining the predictions of multiple base learners. In the first stage, several different base learners make predictions on the original data; in the second stage, a new learner (the meta-learner) learns how best to combine those predictions.

Stacking is chosen mainly because it can improve prediction performance, reduce the risk of overfitting, and offer high flexibility. By combining the predictions of multiple base learners, Stacking draws on the strengths of each model, which improves overall prediction performance and enhances generalization. Its flexibility lies in its support for various types of base learners, which allows it to identify and exploit different patterns and features in the data and thus cope with a range of complex prediction tasks.
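For orientation, a plain two-stage Stacking model can be sketched with scikit-learn's `StackingClassifier`. This shows standard Stacking only, not the weighted variant proposed in this study; the choice of a decision tree, an SVM and a small neural network as base learners, the logistic-regression meta-learner, the scaling pipelines and all hyperparameters are assumptions made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the transaction data.
X, y = make_classification(n_samples=400, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Stage 1: heterogeneous base learners (scale-sensitive models get a scaler).
base_learners = [
    ("dt", DecisionTreeClassifier(max_depth=5, random_state=0)),
    ("svm", make_pipeline(StandardScaler(), SVC(random_state=0))),
    ("nn", make_pipeline(
        StandardScaler(),
        MLPClassifier(hidden_layer_sizes=(16,), max_iter=500, random_state=0),
    )),
]

# Stage 2: the meta-learner is trained on out-of-fold predictions of the base learners.
stack = StackingClassifier(
    estimators=base_learners, final_estimator=LogisticRegression(), cv=5
)
stack.fit(X_train, y_train)
test_accuracy = stack.score(X_test, y_test)
```

Training the meta-learner on out-of-fold predictions (`cv=5`) rather than on in-sample predictions is what limits overfitting in the second stage.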

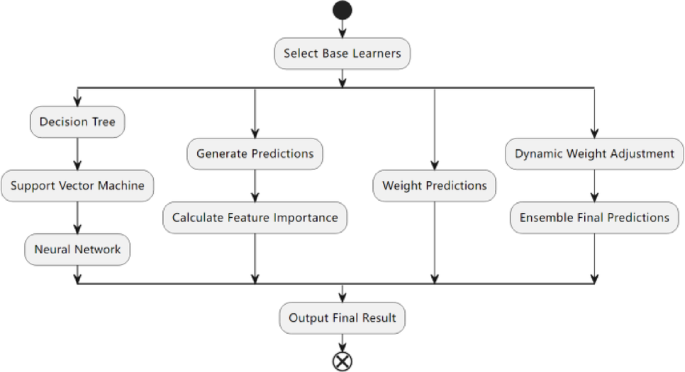

In this study, an innovative integration strategy based on Stacking is designed (Fig. 2). The strategy is novel in three respects. First, it captures different information in the data by using several types of base learners, such as decision trees (DT), support vector machines (SVM) and neural networks (NN). Second, a feature importance weighting step is incorporated into the second stage of Stacking: the prediction outcomes are adjusted according to the feature importance scores produced by the base learners in the first stage. This enables the model to concentrate on the features that most strongly affect the predictions, thereby improving overall precision. Third, a mechanism is devised to dynamically adjust the weights of the learners according to their performance during training, so that better-performing learners carry more weight in the integrated result. Overall, the strategy is intended to improve the model’s predictive performance, reduce the risk of overfitting, and strengthen its robustness to complex data patterns.

Fig. 2 Integration strategy based on stacking.

In the first stage of Stacking, each base learner produces a set of predicted values, and feature importance scores are obtained from the model’s internal evaluation. In the second stage, these importance scores are used to weight the predictions of the base learners.

Suppose there are \(M\) base learners, and that the feature importance score generated by the \(j\)-th base learner is \(\mathrm{Importance}_j\). Then, in the second stage of Stacking, the prediction result \(P_j\) of the \(j\)-th base learner is weighted as follows:

$$W_j = P_j \times \mathrm{Importance}_j$$

(1)

Here, \(W_j\) is the weighted prediction result. This weighting ensures that the model pays more attention to the features that strongly influence the prediction results.

To dynamically adjust the weights of the learners, weights are assigned according to the performance of each base learner on the validation set. A common approach is to use the prediction accuracy of the base learner, or another performance metric, as the basis for the weight. It is assumed that a validation set is available in each iteration to evaluate the base learners. For the \(j\)-th base learner, let its accuracy on the validation set be \(\mathrm{Accuracy}_j\). The learner weight \(W_j\) (distinct from the weighted prediction in Eq. (1), although the same symbol is used) is then calculated as follows:

$$W_j = \frac{\mathrm{Accuracy}_j}{\sum_{k=1}^{M} \mathrm{Accuracy}_k}$$

(2)

This formula ensures that better-performing learners account for a larger share of the integrated result. These weights are used to weight the predictions of the base learners in the second stage of Stacking.
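A small numerical sketch of Eq. (2) follows. The validation accuracies and predicted probabilities are hypothetical, and combining the base learners by a weighted average is one possible reading of how the weights enter the second stage; the study does not spell out the exact combination rule.

```python
def accuracy_weights(accuracies):
    """Normalize validation accuracies into learner weights, as in Eq. (2)."""
    total = sum(accuracies)
    if total == 0:
        raise ValueError("at least one learner must have non-zero accuracy")
    return [a / total for a in accuracies]

def weighted_prediction(probabilities, weights):
    """Weighted average of the base learners' predicted fraud probabilities."""
    return sum(p * w for p, w in zip(probabilities, weights))

# Hypothetical validation accuracies for M = 3 base learners.
val_accuracy = [0.90, 0.80, 0.70]
weights = accuracy_weights(val_accuracy)

# Hypothetical fraud probabilities from the three learners for one transaction.
combined = weighted_prediction([0.2, 0.6, 0.9], weights)
```

With these numbers the weights are 0.375, about 0.333 and about 0.292, so the spread between learners is modest; because accuracies of competent models tend to lie close together, normalizing raw accuracy yields nearly uniform weights unless the learners differ substantially.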

Model training and optimization

In the model training stage, the raw data are first cleaned, transformed and standardized to ensure data quality and to meet the input requirements of the different base learners. Several types of base learners, such as DT, SVM and NN, are then selected to exploit their respective strengths. These base learners first make predictions on the original data and generate preliminary prediction results. Next, the importance of each feature is evaluated using the internal mechanism of each base learner, and these scores are used to weight the base learners’ predictions in the second stage of Stacking to strengthen the influence of key features. Finally, the weighted prediction results are used as a new feature set to train the second-stage meta-learner.

To enhance the model’s performance, grid search combined with cross-validation is used to tune the key parameters of both the base learners and the meta-learner. This involves systematically exploring the parameter space to identify the best parameter configuration. A mechanism is also designed to dynamically adjust the weights of the learners: during cross-validation, the weight of each base learner is adjusted according to its performance, so that better-performing learners receive higher weights and play a greater role in the integrated result.
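Grid search with cross-validation can be sketched with scikit-learn's `GridSearchCV`. The example tunes a single decision tree; the parameter grid, the 5-fold setting and the F1 scoring are assumptions for illustration and are not the grids used in the study.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the training data.
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# Hypothetical grid: 3 x 2 = 6 candidate configurations.
param_grid = {"max_depth": [3, 5, None], "min_samples_leaf": [1, 5]}

# Each configuration is scored by 5-fold cross-validated F1.
search = GridSearchCV(
    DecisionTreeClassifier(random_state=0), param_grid, cv=5, scoring="f1"
)
search.fit(X, y)
best_params = search.best_params_
best_score = search.best_score_
```

The same pattern applies to a stacked model, where nested parameters are addressed with the `estimatorname__parameter` naming convention of scikit-learn.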

To evaluate the generalization ability and performance stability of the model, the data set is divided into a training set, a validation set and a test set in proportions of 70%, 15% and 15%. The model is trained on the training set, parameter tuning and model selection are carried out on the validation set, and the final performance is evaluated on the independent test set.
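A 70/15/15 split can be obtained with two successive calls to `train_test_split`, as in the sketch below. The stratification on the label and the synthetic, imbalanced data are assumptions added here; the study does not say whether the split was stratified or random.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

# Synthetic, imbalanced stand-in for the labelled transactions (about 10% positives).
X, y = make_classification(
    n_samples=1000, n_features=8, weights=[0.9, 0.1], random_state=0
)

# First split off 30% of the data, then halve it into validation and test sets.
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0
)
```

Stratifying keeps the fraud rate similar across the three sets, which matters when positives are rare.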

Model evaluation

To thoroughly assess the model’s performance, we utilize multiple evaluation metrics, such as accuracy, recall, and F1 score.

Accuracy denotes the proportion of samples that the model predicts correctly out of the total sample count. This metric offers insights into the model’s overall predictive prowess. The formula to compute accuracy is provided below:

$$\mathrm{Accuracy}=\frac{\text{Number of correctly predicted samples}}{\text{Total number of samples}}$$

(3)

The recall rate is indicative of the percentage of actual positive instances that the model correctly identifies. It gauges the model’s efficacy in detecting true positives. The formula for calculating the recall rate is presented below:

$$\mathrm{Recall}=\frac{TP}{TP+FN}$$

(4)

In the formula, \(TP\) and \(FN\) denote the numbers of true positives and false negatives, respectively.

The F1 score is the harmonic mean of precision and recall and serves as a comprehensive metric of the model’s performance, where precision, \(TP/(TP+FP)\), is the proportion of predicted positives that are actually positive and \(FP\) denotes the number of false positives. A higher F1 score indicates better model performance. The formula for the F1 score is given below:

$$\mathrm{F1\ Score}=2 \times \frac{\mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision}+\mathrm{Recall}}$$

(5)
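The three metrics can be checked on a small worked example. The labels below are hypothetical, and F1 is computed here from precision and recall, which is its standard definition; fraud is encoded as the positive class 1.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall and F1 from binary labels (1 = fraud)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    # Guard against empty denominators when a class is never present or predicted.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical labels: 3 true positives, 3 true negatives, 1 false positive, 1 false negative.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
```

On heavily imbalanced fraud data, accuracy alone can look high even when most fraud is missed, which is why recall and F1 are reported alongside it.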

To choose the best ML model, the performance of several algorithms, including LR, DT, RF and Gradient Boosting Tree (GBT), is compared. Each algorithm is evaluated on the same data set using the evaluation metrics above, and the comparison of accuracy, recall and F1 score reveals the performance differences among them. The parameters of each base classification model are shown in Table 2.

The experiments were run on the following hardware and software. The hardware platform has an Intel(R) Core(TM) i7-9700K CPU @ 3.60 GHz, 32 GB of DDR4 RAM and a 1 TB SSD, which supports efficient data processing and access. An NVIDIA GeForce RTX 2080 Ti GPU is used to speed up NN training. On the software side, the environment is based on the Ubuntu 20.04 LTS operating system, and the algorithms are implemented in Python 3.12. Data processing and ML tasks rely mainly on NumPy 1.19.5, pandas 1.2.5, scikit-learn 0.24.2 and TensorFlow 2.5.0 with CUDA and cuDNN support. In addition, Git 2.33.0 is used for version control to manage the code and the experimental process.
